MIT releases its 2022 annual survey paper on AI accelerators, detailing the performance strengths and weaknesses of 80+ AI chips

This paper updates the survey of artificial intelligence accelerators and processors from the past three years, and collects and summarizes the commercial accelerators that have been publicly announced with peak performance and power consumption figures.

Performance and power values are plotted on a scatter plot, and several dimensions and observations from the trends on this plot are again discussed and analyzed.

This year's paper includes two new trend plots based on accelerator release dates, along with additional trends for some neuromorphic, photonic, and memristor-based inference accelerators.

Introduction

Just like last year, startups and established technology companies have been slow to announce, release, and deploy artificial intelligence (AI) and machine learning (ML) accelerators.

This is not unreasonable: many of the companies that have released an accelerator report spending three to four years researching, analyzing, designing, verifying, and validating their accelerator design tradeoffs, and building a software stack for programming the accelerator.

Companies that have released subsequent versions of their accelerators report shorter development cycles, although these are still at least two to three years.

The focus of these accelerators is still on accelerating deep neural network (DNN) models. The application space ranges from very low power embedded speech recognition and image classification to data center scale training. Competition to define markets and application areas continues as part of the larger industrial and technological shift in modern computing toward machine learning solutions.

AI ecosystems bring together components from embedded computing (edge computing), traditional high performance computing (HPC), and high performance data analysis (HPDA). These components must work together to effectively provide capabilities that decision makers, warfighters, and analysts can use.

Figure 1 captures an architectural overview of this end-to-end AI solution and its components.

On the left side of Figure 1, structured and unstructured data sources provide different views of entities and/or phenomenology. These raw data products are fed into a data conditioning step, in which they are fused, aggregated, structured, accumulated, and converted into information.

The information generated by the data conditioning step feeds a host of supervised and unsupervised algorithms, such as neural networks, which extract patterns, predict new events, fill in missing data, or look for similarities across datasets, thereby converting the input information into actionable knowledge.

This actionable knowledge is then passed to human beings for decision making in the human-machine teaming phase. The human-machine teaming phase provides users with useful and relevant insight, turning knowledge into actionable intelligence or insight.

Underpinning this system are modern computing systems. Moore's Law trends have ended [2], as have a number of related laws and trends, including Dennard scaling (power density), clock frequency, core counts, instructions per clock cycle, and instructions per joule (Koomey's Law) [3].

Taking a page from the system-on-chip (SoC) trend first seen in automotive applications, robotics, and smartphones, technological progress and innovation continue through the development and integration of accelerators for commonly used kernels, methods, or functions. These accelerators are designed with different balances between performance and functional flexibility. This includes an explosion of innovation in deep machine learning processors and accelerators [4] - [8].

In this series of survey papers, we discuss the relative benefits of these technologies, because they are particularly important for applying AI to domains with significant constraints (such as size, weight, and power), whether in embedded applications or in the data center.

This paper is an update to the IEEE-HPEC papers [9] - [11] from the past three years.

As in past years, this paper continues last year's focus on accelerators and processors geared toward deep neural networks (DNNs) and convolutional neural networks (CNNs), because these networks are so computationally intensive.

Because AI/ML edge applications rely heavily on inference for many reasons, including national defense and national security, this survey focuses on accelerators and processors for inference.

We consider all numerical precision types that an accelerator supports, but for most accelerators the best inference performance is achieved in int8 or fp16/bf16 (IEEE 16-bit floating point or Google's 16-bit brain floating point).
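To make the difference between the two 16-bit floating-point formats concrete, the following is a minimal Python sketch using only the standard library. It is not from the paper. The helper names (`to_bf16`, `to_fp16`, `fits_fp16`) are hypothetical, and `to_bf16` simply truncates the low 16 bits of the fp32 pattern, whereas real hardware typically rounds.

```python
import math
import struct


def to_bf16(x: float) -> float:
    """Round-trip x through bf16 by keeping the top 16 bits of its fp32 pattern.

    Assumption: plain truncation (no rounding), for illustration only.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFF0000))[0]


def to_fp16(x: float) -> float:
    """Round-trip x through IEEE half precision (struct format 'e')."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def fits_fp16(x: float) -> bool:
    """Return True if x is representable as a finite fp16 value."""
    try:
        return math.isfinite(to_fp16(x))
    except (OverflowError, struct.error):
        return False


if __name__ == "__main__":
    for value in (0.1, 1.0, 70000.0):
        fp16 = to_fp16(value) if fits_fp16(value) else "out of range"
        print(f"{value}: bf16={to_bf16(value)}, fp16={fp16}")
```

The sketch illustrates the usual tradeoff: bf16 keeps the 8-bit exponent of fp32 and so covers the same range with fewer significand bits, while fp16 has more significand bits but a much smaller range (its largest finite value is 65504).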

There are many reviews [13] - [24] and other papers covering all aspects of AI accelerators.

For example, the first paper of this multi-year survey included the peak performance of FPGAs for certain AI models; however, several of the surveys above cover FPGAs in depth, so they are no longer included in this survey.

The focus of this multi-year survey, and of this paper, is to gather a comprehensive list of AI accelerators along with their computing capability, power efficiency, and ultimately their computational effectiveness in embedded and data center applications.

With this emphasis, this paper mainly compares neural network accelerators that are useful for government and industrial sensor and data processing applications. Some accelerators and processors included in previous years' papers have been left out of this year's survey.

They were dropped because they have been surpassed by new accelerators from the same company, are no longer available, or are no longer relevant to the topic.

Processor Overview

Many of the latest advances in AI can be attributed at least in part to progress in computing hardware [6], [7], [25], [26], which has made computationally heavy machine learning algorithms, DNNs in particular, feasible.

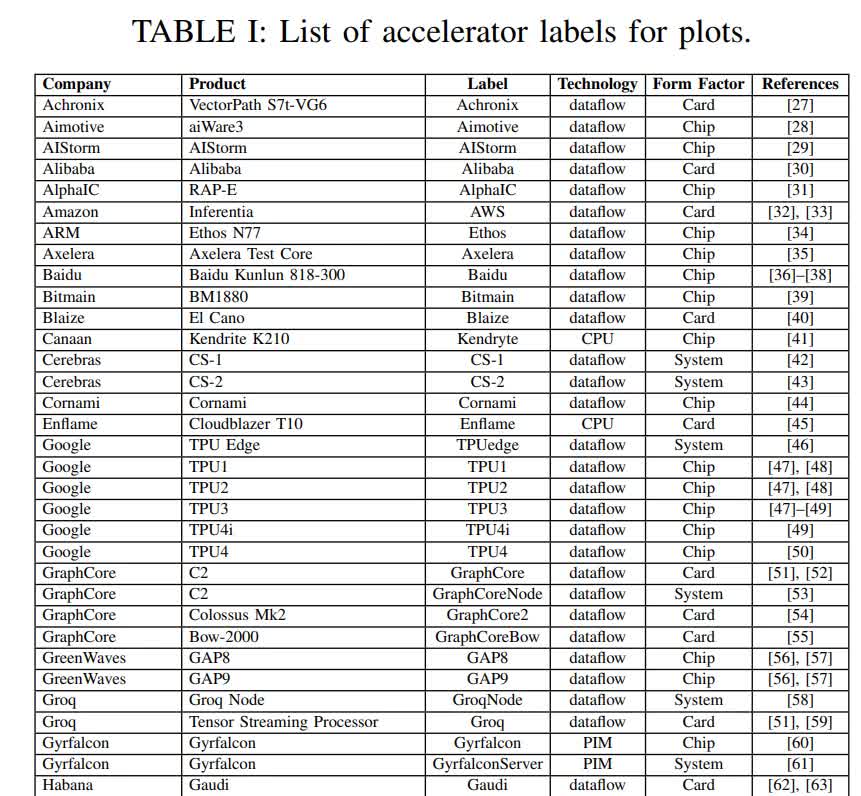

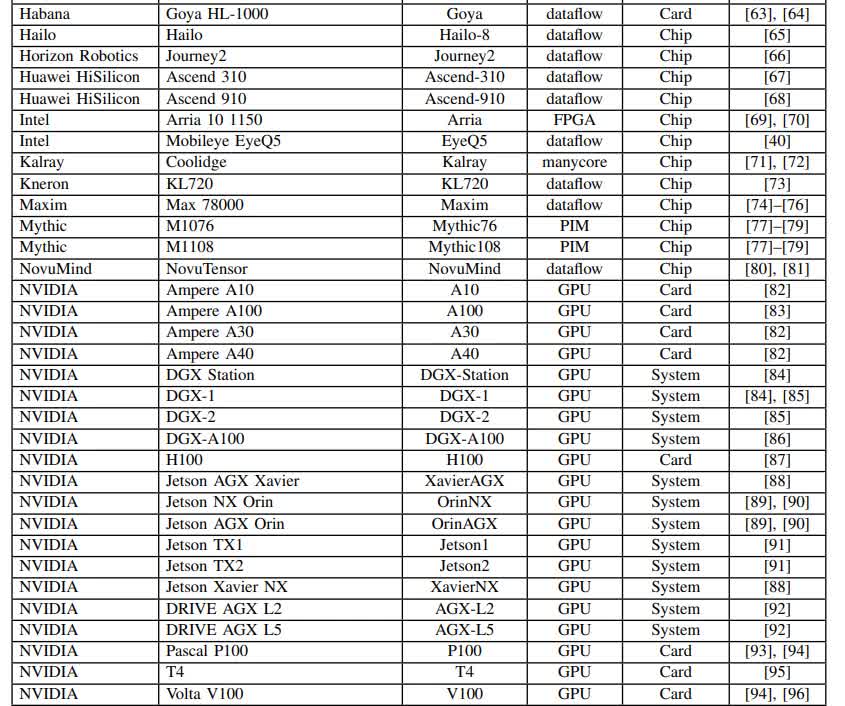

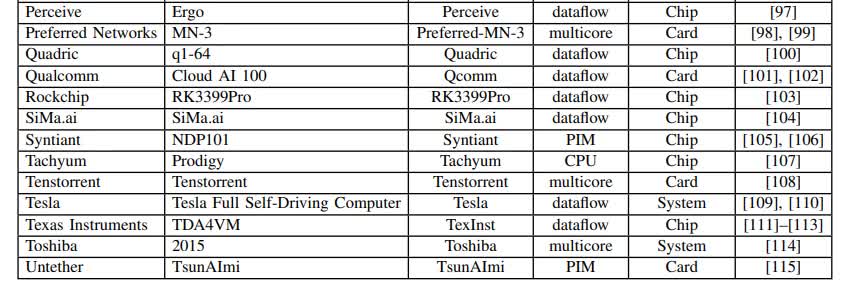

This survey collects performance and power information from public materials, including research papers, technical trade publications, company benchmarks, etc.

Although there are ways to obtain information about companies and startups (including those in their silent period), such information is intentionally excluded from this survey; it will be included once it is made public.

The key metrics of this public data are shown in Figure 2, which plots recent processor capabilities (as of July 2022) as peak performance versus power consumption. The dotted box marks the very dense region that is enlarged and plotted in Figure 3.
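One quantity a reader may want to derive from a performance-versus-power plot is computational efficiency, that is, peak operations per second divided by peak power. The Python sketch below shows that arithmetic. The `Accelerator` class, the chip names, and all numbers are hypothetical placeholders for illustration, not data from the survey.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    """One point on a peak-performance vs. peak-power scatter plot."""
    name: str
    peak_ops_per_s: float  # peak operations per second
    peak_power_w: float    # peak power in watts

    @property
    def tops_per_watt(self) -> float:
        """Peak efficiency in tera-operations per second per watt."""
        return self.peak_ops_per_s / self.peak_power_w / 1e12


# Hypothetical entries; these are NOT figures from the survey.
chips = [
    Accelerator("example-edge-chip", peak_ops_per_s=4e12, peak_power_w=2.0),
    Accelerator("example-datacenter-card", peak_ops_per_s=300e12, peak_power_w=300.0),
]

# Rank the points from most to least efficient.
ranked = sorted(chips, key=lambda c: c.tops_per_watt, reverse=True)

if __name__ == "__main__":
    for chip in ranked:
        print(f"{chip.name}: {chip.tops_per_watt:.2f} TOPS/W")
```

Because both axes are peak values, this ratio is an upper bound on efficiency rather than a measurement of delivered performance on a real workload.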

Observations and Trends

Int8 continues to be the default numerical precision for embedded, autonomous, and data center inference applications. This precision is sufficient for most AI/ML applications with a reasonable number of classes. However, some accelerators also use fp16 and/or bf16 for inference. For training, it becomes an integer representation.
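As a rough illustration of what int8 inference implies for model values, here is a minimal Python sketch of symmetric per-tensor quantization. The paper does not specify a quantization scheme, so this scheme and the function names `quantize_int8` and `dequantize` are assumptions made for the example.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale.

    Assumption: symmetric per-tensor quantization, chosen for simplicity.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from int8 codes."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [-1.0, -0.37, 0.0, 0.25, 1.0]
    codes, scale = quantize_int8(weights)
    restored = dequantize(codes, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print(codes, f"scale={scale:.6f}", f"worst error={worst:.6f}")
```

In this scheme the round-trip error of each value is at most half of one quantization step (`scale / 2`), which gives a sense of why 8 bits can be adequate when the values being represented span a modest range.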

In the very low power and embedded categories, it is very common to release system-on-chip (SoC) solutions, which usually include low-power CPU cores, audio and video analog-to-digital converters (ADCs), encryption engines, network interfaces, and so on. These additional SoC features do not change the peak performance figures, but they do directly affect the reported peak power of the chip, so keep this in mind when comparing them.

The embedded segment has not changed much, which may mean that computing performance and peak power are already adequate for the types of applications in this field.

In the autonomous and data center chip and card categories, the plot becomes very crowded, which is why this region is magnified in Figure 3. In the past few years, several embedded computing microelectronics companies, including Texas Instruments, have released AI accelerators, while NVIDIA has also released and announced several more powerful systems for automotive and robotics applications. For data center cards, the PCIe v5 specification is highly anticipated as a way to break through the 300 W power limit of PCIe v4.

Finally, high-end training systems have not only released impressive performance figures, but their makers have also announced highly scalable interconnect technologies that can connect thousands of cards together. This is particularly important for dataflow accelerators such as Cerebras, GraphCore, Groq, Tesla Dojo, and SambaNova, which are explicitly/statically programmed or "placed and routed" onto the computing hardware. Doing so enables these accelerators to fit very large models, such as transformers [129].